
For businesses seeking to deploy AI models in their operations — either for employees or customers to use — one of the most critical questions isn’t even what model or what to use it for, but when their chosen model is safe to deploy.

How much testing on the backend is necessary? What kinds of tests should be run? After all, most companies would presumably like to avoid the kind of embarrassing (yet humorous) mishaps we’ve seen with some car dealerships using ChatGPT for customer support, only to find users tricking them into agreeing to sell cars for $1.

Knowing how to test models, and especially fine-tuned versions of AI models, could be the difference between a successful deployment and one that falls flat on its face, costing the company both reputation and money. Kolena, a three-year-old startup based in San Francisco co-founded by a former Amazon senior engineering manager, today announced the wide release of its AI Quality Platform, a web application designed to “enable rapid, accurate testing and validation of AI systems.”

This includes monitoring “data quality, model testing and A/B testing, as well as monitoring for data drift and model degradation over time.” It also offers debugging.

“We decided to solve this problem to unlock AI adoption in enterprises,” said Mohamed Elgendy, Kolena’s co-founder and CEO, in an exclusive video chat interview with VentureBeat.

Elgendy got a firsthand look at the problems enterprises face when trying to test and deploy AI, having previously worked as VP of engineering of the AI platform at Japanese e-commerce giant Rakuten, as head of engineering at Synapse, a maker of machine learning-driven threat detection for x-ray machines, and as a senior engineering manager at Amazon.

How Kolena’s AI Quality Platform works

Kolena’s solution is designed to support software developers and IT personnel in building safe, reliable, and fair AI systems for real-world use cases.

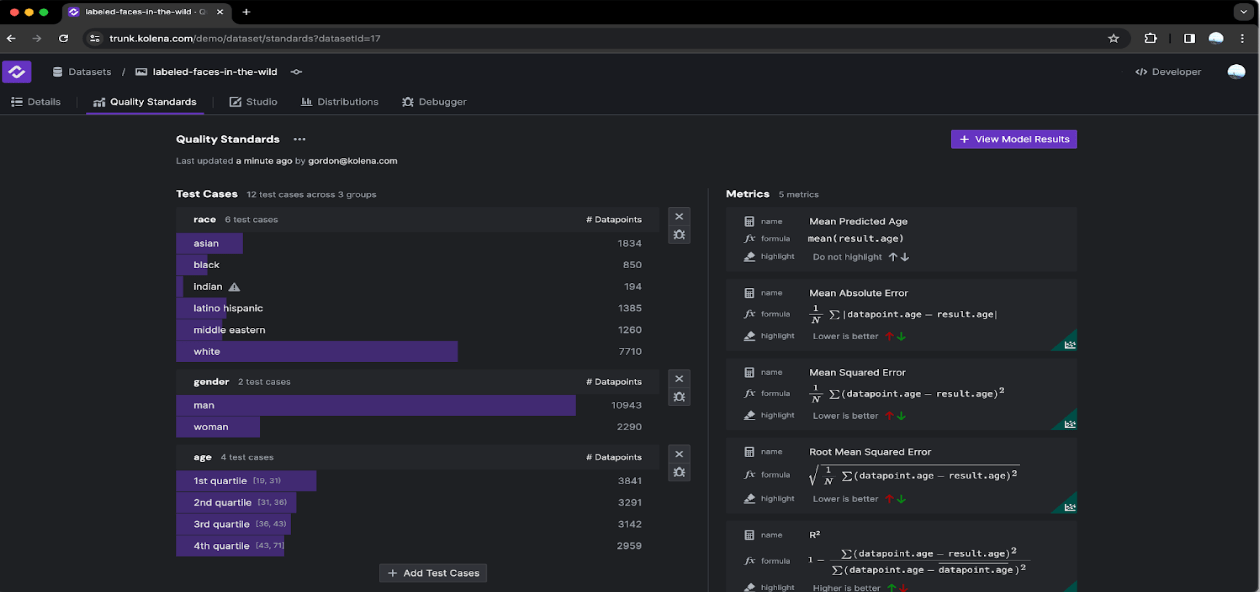

By enabling rapid development of detailed test cases from datasets, it facilitates close scrutiny of AI/ML models in scenarios they will face in the real world, moving beyond aggregate statistical metrics that can obscure a model’s performance on critical tasks.
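To illustrate why aggregate metrics can obscure a failure, here is a minimal Python sketch. It is not Kolena's API; the function names, the scenario labels ("daytime", "night") and the data are all hypothetical, chosen only to show how a strong overall score can hide a weak result on one scenario.

```python
from collections import defaultdict

def overall_accuracy(records):
    """Fraction of records where the prediction matches the expected label."""
    records = list(records)
    return sum(pred == exp for _, pred, exp in records) / len(records)

def accuracy_by_slice(records):
    """Accuracy computed separately for each scenario (slice) name."""
    totals = defaultdict(lambda: [0, 0])  # slice -> [correct, count]
    for slice_name, pred, exp in records:
        totals[slice_name][0] += int(pred == exp)
        totals[slice_name][1] += 1
    return {name: correct / count for name, (correct, count) in totals.items()}

# Hypothetical results: (scenario, predicted label, expected label)
records = (
    [("daytime", "car", "car")] * 90   # 90 correct daytime detections
    + [("night", "car", "car")] * 4    # 4 correct night detections
    + [("night", "none", "car")] * 6   # 6 missed night detections
)

overall = overall_accuracy(records)
per_slice = accuracy_by_slice(records)
print(f"overall: {overall:.2f}")
for name, score in per_slice.items():
    print(f"{name}: {score:.2f}")
```

In this made-up dataset the model scores 94% overall, yet it is right only 40% of the time on the night scenario, which is the kind of gap a scenario-level test case is meant to surface.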

Each Kolena customer connects the model they want to use to Kolena’s API and provides their own dataset along with a set of “functional requirements” describing how the model should operate when deployed, whether that involves manipulating text, imagery, code, audio or other content.

Each customer can also choose to measure attributes such as bias and diversity across age, race and ethnicity, selecting from lists of dozens of metrics. Kolena then runs tests on the model, simulating hundreds or thousands of interactions to see whether the model produces undesirable results and, if so, how often and under what conditions.

It also re-tests models after they have been updated, retrained, fine-tuned, or otherwise changed by the provider or customer, as well as while they are in use after deployment.

“It will run tests and tell you exactly where your model has degraded,” Elgendy said. “Kolena takes the guessing part out of the equation, and turns it into a true engineering discipline like software.”
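The idea of pinpointing where a model has degraded can be sketched as a comparison of per-scenario scores between two model versions. The following is a minimal illustration under stated assumptions, not Kolena's implementation: the `find_regressions` helper, the 0.02 tolerance, and the scores are all hypothetical.

```python
def find_regressions(baseline, candidate, tolerance=0.02):
    """Return scenarios whose score dropped by more than `tolerance`.

    `baseline` and `candidate` map scenario name -> score in [0, 1].
    Scenarios missing from `candidate` are skipped rather than flagged.
    """
    regressions = {}
    for name, old_score in baseline.items():
        new_score = candidate.get(name)
        if new_score is None:
            continue
        drop = old_score - new_score
        if drop > tolerance:
            regressions[name] = round(drop, 4)
    return regressions

# Hypothetical per-scenario accuracy before and after a fine-tuning run
baseline = {"daytime": 0.98, "night": 0.81, "rain": 0.77}
candidate = {"daytime": 0.99, "night": 0.70, "rain": 0.76}

regressions = find_regressions(baseline, candidate)
print(regressions)
```

With these made-up numbers, only the night scenario is flagged: the daytime score improved and the rain score moved by less than the tolerance.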

The ability to test AI systems is useful not just for enterprises, but also for AI model providers themselves. Elgendy noted that Google’s Gemini, recently the subject of controversy for generating racially confused and inaccurate imagery, might have benefited from his company’s AI Quality Platform testing prior to deployment.

Two years of closed beta testing with Fortune 500 companies, startups

True to its aspirations, Kolena isn’t releasing its AI Quality Platform without its own extensive testing of how well it works at testing other AI models.

The company has been offering the platform in a closed beta to customers over the last 24 months and refining it based on their use cases, needs, and feedback.

“We intentionally worked with a select set of customers that helped us define the list of unknowns, and unknown-unknowns,” said Elgendy.

Among those customers are startups, Fortune 500 companies, government agencies and AI standardization institutes, Elgendy explained.

Already, combined, this set of closed beta customers has run “tens of thousands” of tests on AI models through Kolena’s platform.

Going forward, Elgendy said that Kolena was pursuing customers across three categories: “builders” of AI foundation models, buyers in tech, and buyers outside of tech. Elgendy said one company that Kolena was working with provided a large language model (LLM) solution that could hook up to fast food drive-throughs and take orders. Another target market: autonomous vehicle builders.

Kolena’s AI Quality Platform is priced according to a software-as-a-service (SaaS) model, with three tiers of escalating prices designed to track a company’s growth with AI, from examining its data quality, to training a model, to finally deploying it.
